Last month, a fintech CTO I work with asked their legal team a straightforward question: "Are our AI agents high-risk under the EU AI Act?" The lawyers came back three weeks later with a 40-page memo that boiled down to "probably, but it depends." Meanwhile, the August 2, 2026 compliance deadline kept getting closer.

That story captures where most companies are right now. According to an appliedAI study of 106 enterprise AI systems, 40% have unclear risk classifications under the Act. And with the Digital Omnibus package muddying the timeline (more on that in a moment), engineering teams are stuck between two bad options: over-invest in compliance that might get delayed, or under-prepare and risk fines of up to EUR 35 million.

This article is the technical reference I wish that CTO had received instead of the 40-page memo.

What is the Digital Omnibus, and should you wait for it?

Before getting into the compliance checklist, we need to address the elephant in the room. The European Commission proposed the "Digital Omnibus" package in November 2025, and it would push back the August 2026 deadline for high-risk AI systems.

Here is where things stand as of April 15, 2026:

| Actor | Position | Proposed new deadline |

|---|---|---|

| European Commission | Proposed delay | Left flexible |

| Council (13 March 2026) | Favours fixed deadlines | December 2, 2027 for Annex III systems |

| Parliament (26 March 2026) | Aligned with Council | December 2, 2027 (and removed Commission's ability to bring it forward) |

| Current status | Trilogues underway | Second trilogue scheduled for April 28, 2026 |

Both co-legislators want the delay. That is a strong signal. But the original August 2, 2026 deadline is still the law.

My recommendation: build your compliance infrastructure now. If the deadline shifts to December 2027, you get extra runway for testing and refinement. If it doesn't shift, you are covered. The worst outcome is starting compliance work in Q3 2026 after assuming a delay that never materializes.

When does an AI agent become "high-risk"?

The Act organizes AI systems into four risk categories: unacceptable (banned), high-risk (heavily regulated), limited risk (transparency obligations), and minimal risk (no obligations). AI agents land in the high-risk bucket when they operate in one of eight domains listed in Annex III AND their function goes beyond narrow procedural tasks.

The eight Annex III categories, mapped to common AI agent use cases:

| Category | AI agent examples |

|---|---|

| Biometrics | Identity verification agents, facial recognition |

| Critical infrastructure | AI agents managing power grids, digital infrastructure safety |

| Education | Admissions scoring agents, exam proctoring |

| Employment | Resume screening, recruitment automation, performance evaluation |

| Essential services | Credit scoring agents, healthcare triage, insurance pricing |

| Law enforcement | Predictive policing, evidence evaluation |

| Migration and border control | Document verification, visa processing |

| Justice and democratic processes | Legal research assistants, dispute resolution |

The practical test: does your AI agent determine credit scores, screen job applicants, handle regulatory reporting, make infrastructure safety decisions, triage emergency calls, price insurance, or evaluate healthcare eligibility? If yes, it is high-risk.

Side note: the Act explicitly carves out agents that perform only "narrow procedural tasks" or that do not replace or influence human assessment. So a chatbot that answers FAQs about your insurance product is probably fine. An agent that decides claim amounts is not.

What are the core compliance requirements for high-risk AI agents?

Articles 9 through 15 define the technical obligations. I will walk through each one with what it means in practice for an engineering team building or deploying AI agents.

Article 9: Risk management system

You need a continuous, iterative risk management process that runs across the entire lifecycle of the system. This is not a one-time risk assessment before launch. It means identifying known and foreseeable risks, estimating risks that may emerge during operation, adopting mitigation measures, and testing to ensure consistent performance.

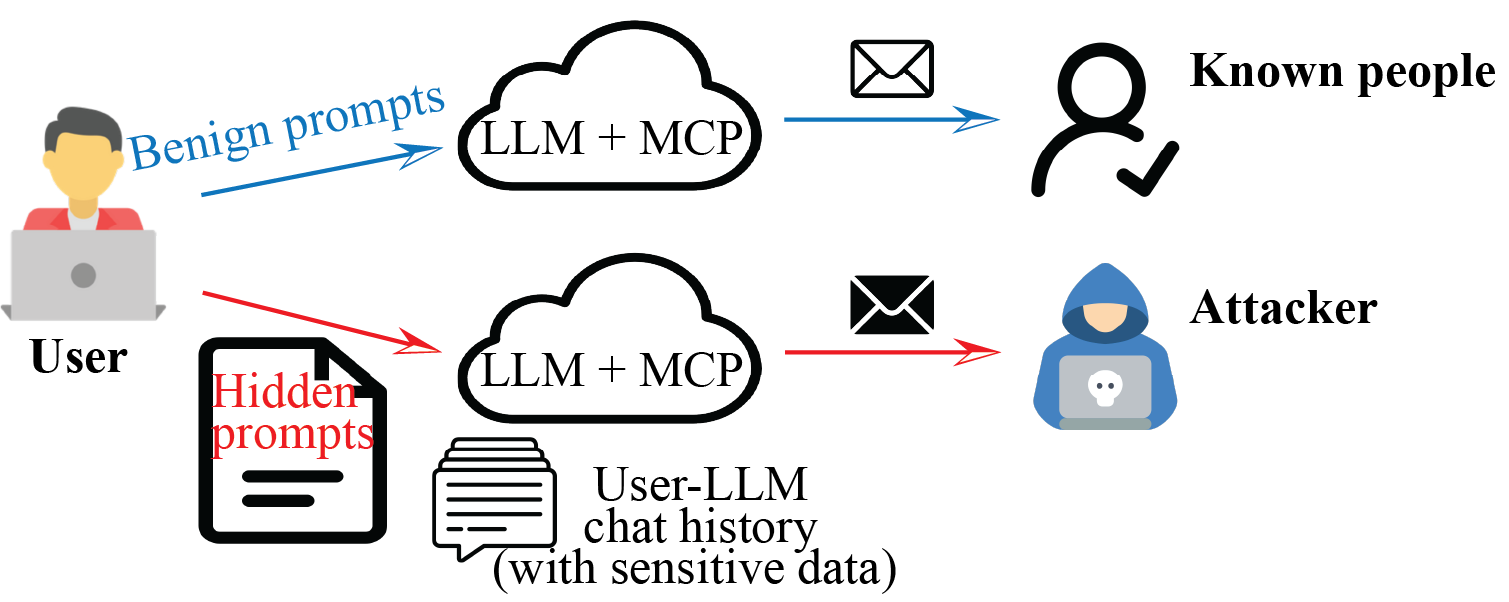

For AI agents, this translates to: what happens when the agent encounters an input outside its training distribution? What if the API it calls returns unexpected data? What if a prompt injection attempts to override its instructions? Each scenario needs a documented risk assessment and a mitigation strategy.

Article 10: Data governance

Training, validation, and testing datasets must be relevant, representative, and as error-free as possible. You need bias detection measures in place and must identify data gaps. The Act does allow processing of special category personal data (the GDPR Article 9 categories: race, health, political opinions) for bias detection, but only under strict safeguards.

Article 11 + Annex IV: Technical documentation

Nine mandatory sections, drawn up before the system goes to market. This is the heaviest documentation lift:

- General description (purpose, provider, version history)

- Development and design specifications (algorithms, key design choices, rationale)

- Monitoring and control (capabilities, limitations, accuracy metrics per user group)

- Performance metrics (appropriateness for the specific system)

- Risk management system (per Article 9)

- Lifecycle changes (every modification documented)

- Applied standards (harmonised standards or alternative solutions)

- EU Declaration of Conformity (per Article 47)

- Post-market monitoring plan (per Article 72)

Worth knowing: SMEs and micro-enterprises can use a simplified documentation form. If you are a 30-person startup deploying a single high-risk agent, you are not held to the same documentation standard as a bank with 200 AI systems.

Article 12: Record-keeping and logging

This is the one that keeps engineering teams up at night. High-risk AI systems must support automatic recording of events over the system's lifetime. Logs must be retained for a minimum of 6 months, and longer if sector-specific law requires it.

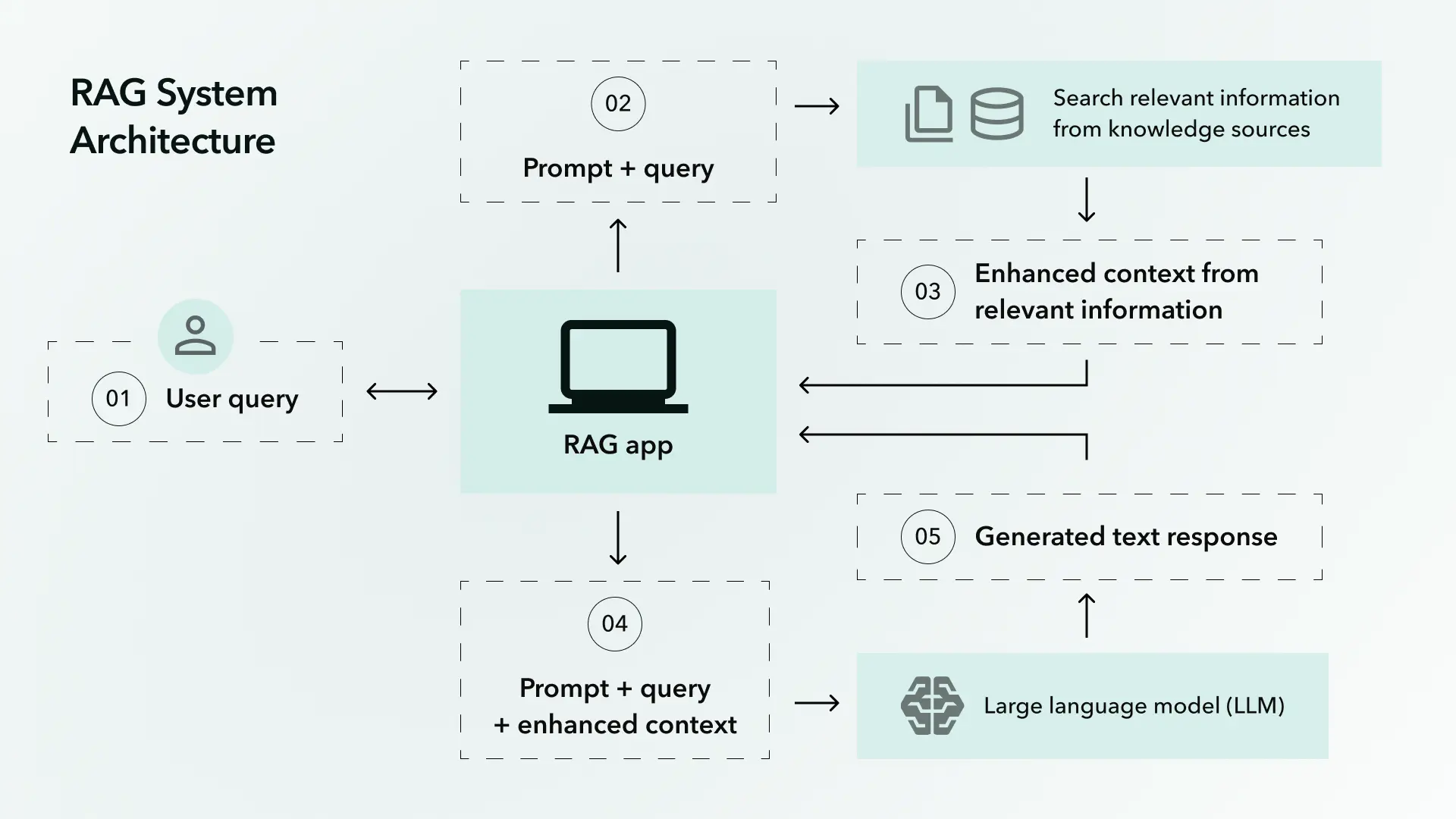

I will cover the specifics of what to log in the next section because this deserves its own treatment. For the deeper architectural patterns — schemas, retention, tamper-evidence — see our AI audit trail architecture guide for HIPAA, GDPR, and DORA.

Article 13: Transparency

Deployers (the companies using the AI system, as opposed to providers who build it) must be able to understand the system's capabilities and limitations, interpret its output, and access its logs. Instructions for use must include provider identity, performance metrics, known limitations, human oversight measures, and maintenance requirements.

Article 14: Human oversight

The system must be designed with interface tools that let a human overseer:

- Understand the system's capacities and limitations

- Monitor its operation and detect anomalies

- Remain aware of automation bias (the tendency to over-rely on AI output)

- Interpret the system's output correctly

- Decide not to use the system or disregard its output in any situation

- Intervene or interrupt the system through a stop mechanism

For biometric identification systems, every decision must be verified by at least two qualified individuals.

What exactly must an AI agent log under Article 12?

Article 12 requires logging events that help identify risk situations, support post-market monitoring, and enable operational oversight. But the regulation does not hand you a JSON schema. So here is what the emerging standards (prEN 18229-1, ISO/IEC DIS 24970) and current best practices point to.

Minimum fields per agent action:

| Field | Purpose | Example |

|---|---|---|

| Timestamp | Chronological ordering, retention enforcement | 2026-04-15T14:23:07Z |

| User identity | Accountability, access control audit | user_id: cto-4291 |

| Full prompt text | Reproduce the decision context | The complete input |

| Knowledge sources retrieved | Verify factual grounding | Specific document IDs and sections |

| Response generated | Audit the output | The complete agent response |

| Tool calls executed | Trace external actions | API name, parameters, return values |

| Decision confidence score | Route low-confidence cases to humans | 0.73 |

| Model version | Reproduce behavior | gpt-4-turbo-2026-03-15 |

| Prompt/policy version | Track configuration changes | v2.4.1 |

The infrastructure matters as much as the data. Logs must be immutable and centralized, not scattered across application-level logging. They need to be tied to identity and queryable by security and compliance teams. OpenTelemetry (OTel) has become the de facto standard for vendor-neutral observability, and for good reason: it gives you a consistent telemetry pipeline that works across cloud providers.

Every action should be traceable to a specific model version, prompt version, policy configuration, and human owner. If an auditor asks "why did the agent deny this credit application on March 3rd," you should be able to reconstruct the exact state of the system at that moment.

How should human oversight work for autonomous AI agents?

Article 14 requires human oversight, but it does not prescribe a single architecture. Three patterns have emerged, each appropriate for different risk levels:

Human-in-the-loop (HITL): A human approves every high-stakes decision before the agent executes it. The agent recommends; the human acts. This is the safest pattern and the right choice for credit decisions, healthcare triage, and employment screening where individual outcomes carry serious consequences.

Human-on-the-loop (HOTL): The agent operates autonomously, but a human monitors in real-time and can intervene at any point. Think of it like a self-driving car with a safety driver. Good for agents handling high volumes of medium-risk decisions, like insurance claim categorization.

Human-over-the-loop: A human sets the parameters, reviews outcomes periodically, and adjusts the agent's boundaries. The agent has defined autonomy within guardrails. This works for lower-risk Annex III applications where the volume makes per-decision review impractical.

Regardless of which pattern you choose, every agent needs:

- Structured intervention points where humans can inspect the decision pipeline

- A stop/override mechanism (the regulation literally says "stop button or similar procedure")

- Confidence thresholds that route uncertain decisions to human review

- Escalation paths with defined criteria

- A complete, immutable audit trail of all actions

The appropriate pattern depends on risk level, autonomy, and context per Article 14(2). Document your choice and the reasoning behind it. That documentation itself is part of compliance.

How do the AI Act and GDPR interact?

If your AI agent processes personal data of EU residents, you are subject to both regimes. They complement each other in some areas and create tension in others. Financial-services teams should also read our DORA compliance guide for AI in financial services, which treats AI as ICT risk alongside the AI Act obligations.

| Aspect | GDPR | AI Act | Watch out for |

|---|---|---|---|

| Impact assessments | DPIA (Art. 35) | FRIA (Art. 27) | Integrate into one process |

| Special category data | Generally prohibited (Art. 9) | Allowed for bias detection (Art. 10(5)) | AI Act exception is narrow, GDPR safeguards still apply |

| Automated decisions | Right to contest (Art. 22) | Human oversight required (Art. 14) | AI Act goes further with technical requirements |

| Data minimization | Collect the minimum (Art. 5(1)(c)) | Data must be representative (Art. 10) | AI needs more data, GDPR wants less |

The practical move: run a unified DPIA + FRIA assessment. The two frameworks ask overlapping questions about risk, impact, and mitigation. Doing them separately doubles the work and introduces inconsistencies. Taylor Wessing's analysis of the interaction confirms this approach, and it is what we recommend to clients deploying agents in regulated sectors.

By the way, the AI Act does not create any new legal basis for data processing. You still need a valid GDPR basis (consent, legitimate interest, contractual necessity) for every piece of personal data your agent touches. The AI Act layers on top; it does not replace anything.

What does compliance actually cost?

The compliance industry loves vague language around costs. Here are real numbers, drawn from industry surveys and market data compiled by SQ Magazine and Future Market Insights.

| Cost item | Range | Notes |

|---|---|---|

| Self-assessment conformity (via harmonised standards) | EUR 30,000-70,000 per system | The minimum path |

| Third-party conformity assessment | EUR 50,000-150,000 per system | Required for certain Annex III categories |

| Full high-risk system deployment | EUR 200,000-500,000 initial | Plus EUR 80,000-150,000/year ongoing |

| Post-market monitoring | EUR 40,000-80,000/year | Data collection, bias detection, incident reporting |

| Employee training | EUR 1,000-5,000 per person | Compliance-specific programs |

| Large enterprise portfolio ($1B+ revenue) | $8-15 million initial | Across all high-risk AI systems |

| SME setup (50-100 employees) | EUR 200,000-280,000 initial | Plus EUR 80,000-100,000/year |

Compare those numbers to the penalty ceiling. A company with EUR 1 billion in annual turnover faces:

- Tier 1 (prohibited practices): up to EUR 70 million (7%)

- Tier 2 (high-risk obligations): up to EUR 30 million (3%)

- Tier 3 (misleading information): up to EUR 10 million (1%)

The math is straightforward. Compliance for a single high-risk system costs EUR 200,000-500,000. A Tier 2 violation for that same system could cost EUR 15 million. The return on compliance investment is hard to argue with. The other cost surprise is internal: review throughput, which we unpack in the compliance cost of 10x AI engineering.

Global AI governance spending is projected to hit $492 million in 2026 according to Gartner, with the broader enterprise AI governance market expected to grow from $2.2 billion in 2025 to over $11 billion by 2036 at a 15.8% CAGR. Companies are spending. The question is whether they are spending in the right places.

The compliance checklist

Here is the condensed checklist for teams building or deploying high-risk AI agents. Print it, pin it to your project board, or, well, save it to your compliance wiki. Each item maps to a specific Article.

Risk classification and assessment

- Determine whether your AI agent falls under Annex III high-risk categories (Art. 6)

- Run a risk management process covering the full system lifecycle (Art. 9)

- Document all identified risks, mitigation measures, and residual risk (Art. 9)

Data governance

- Audit training, validation, and test data for representativeness and bias (Art. 10)

- Implement bias detection and mitigation measures (Art. 10)

- If processing special category data for bias detection, document the GDPR safeguards (Art. 10(5))

Technical documentation

- Prepare all 9 sections of Annex IV documentation before market placement (Art. 11)

- Version all documentation and update with every system change (Art. 11)

Logging

- Implement automatic event recording across the agent's full operation (Art. 12)

- Log prompts, responses, tool calls, confidence scores, model versions, and knowledge sources

- Ensure logs are immutable, centralized, and retained for 6+ months (Art. 12)

Transparency

- Provide deployers with clear instructions on capabilities, limitations, and accuracy (Art. 13)

- Document known failure modes and performance boundaries (Art. 13)

Human oversight

- Choose and document an oversight pattern (HITL, HOTL, or human-over-the-loop) (Art. 14)

- Implement a stop/override mechanism (Art. 14)

- Set confidence thresholds for human escalation (Art. 14)

- Design the system so overseers can detect automation bias (Art. 14)

GDPR integration

- Run a unified DPIA + FRIA assessment (GDPR Art. 35 + AI Act Art. 27)

- Confirm a valid legal basis for all personal data processing (GDPR Art. 6)

Monitoring and maintenance

- Establish a post-market monitoring plan (Art. 72)

- Budget EUR 40,000-80,000/year for ongoing monitoring per system

- Track emerging harmonised standards (ISO/IEC 42001, prEN 18229-1, ISO/IEC DIS 24970)

What should you do this week?

The compliance checklist is useful, but checklists gather dust without momentum. So here are three things you can do before Friday.

First, classify your AI agents. Walk through Annex III's eight categories and tag each agent as high-risk, limited risk, or minimal risk. If 40% of enterprise systems have unclear classifications (per the appliedAI study), your organization probably has a few ambiguous ones too. Get them classified. The rest of the process depends on it.

Second, start logging everything. Even if you are unsure about the final schema, begin capturing prompts, responses, tool calls, and model versions today. Retrofitting logging into a production agent is far harder than building it in from the start. OpenTelemetry is free, vendor-neutral, and well-documented. Set up the pipeline now and refine the schema later.

Third, assign an owner. Compliance work that belongs to "the team" belongs to nobody. Designate a person (or hire one, since 65% of organizations plan to upskill employees for AI governance roles by 2026) who owns the compliance workstream, reports to leadership, and has the authority to block a deployment that is not ready.

The August 2026 deadline may shift to December 2027. It may not. Either way, the 20-item checklist above represents work that will need to get done eventually. The companies that start now will have compliant systems. The companies that wait will have lawyers.

How BrightByte approaches AI agent compliance

We build AI agents for regulated industries, specifically fintech and compliance-heavy sectors. Every agent we ship includes Article 12 logging, human oversight interfaces, and the technical documentation the Act requires. For the full body of work, see our compliance playbook. If you are staring at the checklist above and thinking "we need help," reach out for a compliance architecture review.

Sources: EU AI Act full text, European Parliament Digital Omnibus position, Addleshaw Goddard Digital Omnibus analysis, Taylor Wessing AI Act + GDPR analysis, SQ Magazine compliance cost statistics, Future Market Insights governance market report, Article 99 penalties, Annex III categories, appliedAI enterprise study, Gartner AI governance forecast