A Kiteworks survey from 2025 found that 97% of organizations reporting AI-related breaches had no proper AI access controls in place. Across the industry, 72% of organizations already run AI in production, yet only 9% have mature AI governance. This article covers the architecture you need to close that gap: what to log, how to store it, and how to prove it has not been tampered with.

The Compliance Problem with AI Agents Is Structural

Traditional software systems log requests and responses. A user clicks a button, the server processes the action, a line gets written to a log file. Straightforward.

AI agents break this model.

A single agent action can span multiple LLM calls, tool invocations, database queries, and external API requests. Each step involves a different model version, a different confidence score, a different set of input features. The agent might access patient records in one tool call and write a summary in the next. Between those two steps, the model might have hallucinated a drug interaction that never existed.

So when a regulator asks "what did your AI system do with this patient's data on March 14th," you need more than a log line. You need a complete trace that captures every decision the agent made, every piece of data it touched, every model version and parameter configuration involved.

That trace is your audit trail. And if you are operating in healthcare, financial services, or anywhere in the EU, three regulations dictate exactly what it must contain.

Three Regulations, One Architecture Problem

HIPAA, GDPR, and DORA come from different regulatory traditions and protect different things. HIPAA protects health information. GDPR protects personal data and individual rights. DORA protects financial system stability. But all three converge on a single technical requirement: you must be able to reconstruct what your system did, to whom, and why.

Here is how their specific demands compare:

| Requirement | HIPAA (45 CFR 164.312) | GDPR (Articles 5, 22, 30) | DORA (Articles 17-19, 28+) |

|---|---|---|---|

| What triggers logging | Any access to ePHI | Any processing of personal data | Any ICT-related incident or change |

| Identity tracking | Unique user ID per person accessing ePHI; AI agents need unique IDs too | Controller must demonstrate who processed data and when | Full chain of ICT subcontractors documented |

| Explainability | Log what was accessed and outcome | "Meaningful information about the logic involved" in automated decisions (Art. 13, 14, 15) | Root cause analysis for major incidents |

| Retention period | 6 years minimum (45 CFR 164.530(j)) | As long as necessary for purpose; no fixed floor | Aligned to supervisory reporting; testing records every 3 years |

| Tamper evidence | Required (tamper-evident, immutable format) | Implicit under accountability principle (Art. 5(2)) | Required for change management trails |

| Encryption | AES-256 at rest, TLS 1.2+ in transit (mandatory after 2025 NPRM) | "Appropriate technical measures" (Art. 32) | ICT security policies must cover encryption |

| Breach reporting | Within 60 days to HHS | Within 72 hours to supervisory authority | Three-stage: initial (hours), intermediate, final with root cause |

| Third-party vendors | Business Associate Agreements required | Data Processing Agreements under Art. 28 | Register of all ICT third-party arrangements; audit rights mandatory |

| AI-specific rules | AI agents must be in asset inventory; risk analysis must mention AI | Automated decision-making rights under Art. 22; right to human intervention | AI model failures classifiable as ICT incidents |

A few things stand out here.

HIPAA is the most prescriptive about what to log. The regulation spells out authentication events, ePHI data actions (create, read, update, delete), and administrative activities like privilege escalations. If your AI agent edits a clinical note, the log must capture who authorized the agent, which records it accessed, and the result. The January 2025 proposed rule update goes further: it eliminates the old distinction between "required" and "addressable" safeguards, making encryption mandatory with minimal exceptions. That rule is expected to become final around May 2026, with a 180-day compliance window.

GDPR is the most demanding about why you logged it and what you can explain back to the data subject. Articles 13(2)(f), 14(2)(g), and 15(1)(h) require "meaningful information about the logic involved" in automated decisions. This does not mean you have to expose your model weights or prompts. It means you must be able to explain the method: what input features influenced the decision, how the model version was selected, what the confidence score was. SHAP values or counterfactual explanations ("if your income had been X, the result would have been Y") satisfy this requirement.

DORA is the most demanding about what happens after something goes wrong. Article 19 requires a three-stage incident reporting process: initial notification within hours of classifying an incident as major, intermediate reports when circumstances change, and a final report with root cause analysis. For AI systems, this means your audit trail must support rapid investigation, which is the argument for SIEM integration rather than just archival storage. We unpack the fintech-specific obligations in our DORA compliance guide for AI in financial services.

The cumulative GDPR fines since 2018 now exceed EUR 7.1 billion. Healthcare breaches cost $7.42 million on average in 2025 according to IBM, with containment taking 279 days. These are not hypothetical risks.

What Your Audit Log Entry Should Actually Contain

I have seen teams approach audit logging by tacking on a few extra fields to their existing application logs. That works until the first audit, where you discover that nobody captured the model version, or the system prompt hash, or which specific records the agent accessed.

Here is a JSON schema for a complete AI agent audit record. This covers HIPAA, GDPR, and DORA simultaneously. (I debated whether to include the performance fields here since they are more of an engineering concern than a compliance one, but auditors have asked about cost and latency in enough assessments that I keep them in.)

{

"event_id": "uuid-v7",

"timestamp": "2026-04-15T14:32:01.123Z",

"trace_id": "abc-123-def",

"span_id": "span-456",

"session_id": "session-789",

"identity": {

"agent_id": "agent-compliance-v2",

"agent_version": "2.3.1",

"user_id": "user-12345",

"user_role": "compliance_officer",

"authorization_method": "oauth2",

"ip_address": "10.0.1.42",

"device_fingerprint": "hash-xyz"

},

"model": {

"provider": "openai",

"model_name": "gpt-4o",

"model_version": "2026-01-15",

"temperature": 0.1,

"top_p": 0.95,

"max_tokens": 4096,

"system_prompt_version": "v3.2.0",

"system_prompt_hash": "sha256:abc123..."

},

"request": {

"input_hash": "sha256:def456...",

"input_tokens": 1523,

"input_content_type": "text",

"pii_detected": false,

"phi_detected": false,

"data_classification": "confidential"

},

"response": {

"output_hash": "sha256:ghi789...",

"output_tokens": 892,

"confidence_score": 0.94,

"hallucination_flag": false,

"eval_scores": {

"relevance": 0.92,

"faithfulness": 0.97,

"safety": 1.0

}

},

"tools": [

{

"tool_name": "database_query",

"tool_version": "1.2.0",

"inputs_hash": "sha256:...",

"outputs_hash": "sha256:...",

"records_accessed": 3,

"target_objects": ["patient-record-456", "patient-record-789"],

"duration_ms": 234

}

],

"compliance": {

"regulation": ["HIPAA", "GDPR"],

"lawful_basis": "legitimate_interest",

"consent_id": "consent-abc-123",

"dpia_reference": "DPIA-2026-003",

"data_residency": "eu-west-1",

"retention_policy": "6_years"

},

"chain_integrity": {

"previous_hash": "sha256:previous...",

"current_hash": "sha256:current...",

"merkle_root": "sha256:root..."

}

}

A few things worth calling out:

Hashes instead of raw content. The input_hash and output_hash fields store SHA-256 digests of the actual prompt and response, not the raw text. Raw content goes into a separate encrypted table with restricted access. This keeps the main audit table queryable without exposing sensitive data to everyone who needs audit access.

The chain_integrity block. Each record includes the hash of the previous record. This creates a hash chain where modifying any historical entry changes all subsequent hashes, making tampering detectable. More on this in the architecture section below.

The tools array. This is where HIPAA compliance lives or dies for AI agents. If your agent calls a database query tool that returns patient records, you need to log exactly which records it accessed (target_objects) and how many (records_accessed). "The agent queried the database" is not enough.

system_prompt_hash. GDPR's right to explanation does not require disclosing your actual prompt. But you do need to prove which version of the prompt was active when a decision was made. Hashing the system prompt and versioning it solves this.

Immutable by Design: The Architecture

A log stored in a writable database with access controls is not tamper-evident. An administrator with enough privileges can still modify it without detection. Compliance-grade audit trails require architectural immutability.

The core pattern is hash chaining combined with tiered storage:

[AI Agent] --> [Log Ingestion API] --> [Batching + Hashing]

|

v

[Merkle Tree]

|

+-------------+-------------+

| | |

v v v

Hot Storage Warm Storage Cold Storage

(PostgreSQL/ (Kafka (S3/GCS

TimescaleDB) immutable WORM policy)

topics)

|

v

[Merkle Root Anchor]

(blockchain tx or

timestamping authority)

Hash chaining means each new log entry incorporates the SHA-256 digest of the previous entry. Changing any record in history invalidates all successor hashes. This is the same principle behind blockchain, just applied to your audit log without the consensus overhead.

Merkle trees let you batch records and verify integrity in O(log n) time instead of O(n). You compute a Merkle root for each batch and anchor it to an external tamper-proof store. During an audit, you can prove any individual record was part of an unmodified batch by providing the Merkle proof.

Storage tiers match access patterns to cost:

| Tier | Technology | Purpose | Retention |

|---|---|---|---|

| Hot | PostgreSQL/TimescaleDB, append-only | Real-time queries, dashboards | 30-90 days |

| Warm | Apache Kafka, immutable topics | Stream processing, SIEM feed | 1 year |

| Cold | S3/GCS with WORM (Write Once Read Many) policy | Long-term compliance archive | 6-10 years |

| Anchor | Blockchain or RFC 3161 timestamp authority | Tamper-proof root hash verification | Permanent |

The database layer enforces append-only semantics at the SQL level:

REVOKE DELETE, UPDATE ON audit_events FROM ALL;

This is table-level enforcement. Even a database administrator cannot modify records through normal SQL operations. Combined with WORM storage for cold archives and periodic Merkle root anchoring, you get a system where the cost of undetectable tampering becomes prohibitively high.

One architectural decision that trips teams up: where to store raw prompts and responses. My recommendation is a separate audit_content table with AES-256 encryption and references to your KMS for key management. The main audit_events table stores only hashes. This separation means your compliance officers can query audit patterns (who accessed what, when, with what outcome) without being able to read the actual content, and your security team can investigate specific incidents by decrypting individual entries with proper authorization.

SIEM Integration: From Archive to Detection

Storing audit logs and querying them during an annual audit is the minimum. But DORA's incident reporting timelines, initial notification within hours, demand real-time detection.

The architecture for this uses Kafka as the central nervous system:

AI Agents --> Structured Logs (JSON) --> Apache Kafka (immutable topics)

|

+----------+----------+

| | |

v v v

SIEM Feed Cold Storage Real-time

(Splunk/ (S3 WORM) Dashboard

Sentinel/ (Grafana)

Elastic)

The SIEM layer enables detection patterns that static log analysis cannot:

- Injection campaigns: patterns of denied access attempts across multiple records from a single agent or session.

- Data exfiltration: anomalous output sizes or unusual query volumes from one agent.

- Model drift: sudden changes in confidence scores across a population of requests, which can indicate the model is behaving differently than expected.

- Privilege escalation: attempts to access data or tools outside the agent's assigned scope.

A SIEM-integrated audit trail compresses the detection window for governance failures from weeks to minutes. That is the difference between catching an incident within DORA's reporting timeline and explaining to your regulator why you missed it.

For the Kafka layer, use Avro with a Schema Registry for schema enforcement and evolution. As your audit schema changes over time (and it will, every time a new regulation or internal policy shifts the requirements), backward-compatible schema evolution means old consumers keep working while new fields get added.

RBAC: Who Gets to See the Audit Trail

The audit trail itself contains sensitive data. Access to it must be controlled and, yes, logged. Here is the RBAC model we use:

| Role | Permissions | Scope |

|---|---|---|

| System Admin | Configure log infrastructure, manage retention policies | Infrastructure only; cannot read log content |

| Security Officer | Full read access, export, alert configuration | All logs |

| Compliance Officer | Read access, generate reports, export | Filtered by regulation tag |

| Data Protection Officer | Read access for GDPR-related logs | GDPR-tagged events only |

| AI Engineer | Read own agent logs, debug traces | Own agent/project scope |

| External Auditor | Time-limited read access, integrity verification | Defined audit scope with expiration |

| AI Agent (service account) | Write-only append access | Own events only |

The critical principle: even administrators with full infrastructure access must not be able to modify or delete audit logs. WORM storage, separate credentials, and cryptographic verification enforce this at the architectural level, not just the policy level.

This separation sometimes frustrates engineering teams. I have had conversations where a developer wants to "clean up" noisy log entries from a buggy deployment. The answer is always no. You append a correction record, you never modify or delete existing entries. The noisy records from the buggy deployment are themselves audit-relevant data: they document what happened during an incident.

Tools for AI Agent Audit Trails

I want to be specific about what exists today, because the tooling landscape for AI audit trails is genuinely fragmented. Most teams end up combining two or three tools.

Langfuse (open-source, MIT license, self-hostable) is the strongest option for the observability layer. It captures every LLM call, retrieval step, tool execution, and custom logic with timing, inputs, outputs, and metadata. The Enterprise Edition adds immutable audit logs and exportable audit records. Self-hosting within your VPC solves GDPR data residency concerns. The gap: Langfuse does not provide hash chaining, WORM storage, or SIEM integration out of the box. You build those around it.

FireTail (commercial SaaS) takes a different approach. It automatically captures every interaction with AI models, user identity, timestamps, model metadata, prompt and response metadata, and centralizes it into an audit trail with pre-built policies for OWASP Top 10 for LLMs, MITRE ATLAS, and NIST AI RMF. It also handles GDPR and CCPA requirements like the right to delete personal data while retaining audit metadata. The trade-off is that it is a SaaS product, so you are trusting a third party with your audit data.

Zenity (acquired by Microsoft in 2024) focuses on runtime agent security. It breaks AI agent interactions into granular steps, flags suspicious behavior like prompt injection or data leaks, and generates audit trails mapped to OWASP, NIST, and PCI-DSS. Forrester included Zenity in its "AI Governance Solutions Landscape, Q2 2025" report, and Gartner listed it as a representative vendor in the 2025 Market Guide for AI TRiSM. The focus here is more on security monitoring than compliance record-keeping, so it complements rather than replaces a purpose-built audit architecture.

Galileo AI addresses evaluation and automated gatekeeping. Its "Composite Metrics" combine multiple quality scores, and if an agent score drops below threshold, the system can kill the session or flag a human before the LLM responds. This is useful for the GDPR "right to human intervention" requirement under Article 22(3): you can demonstrate that automated decisions were subject to quality gates.

OpenTelemetry deserves a mention as the emerging standard for trace linkability across AI agent systems, especially for MCP (Model Context Protocol) implementations. It is not a compliance tool by itself, but it provides the traceid and spanid infrastructure that makes end-to-end audit trails possible across distributed agent architectures.

A common mistake I see: teams adopt one of these tools and assume compliance is handled. It is not. Langfuse gives you observability. FireTail gives you policy enforcement. Zenity gives you runtime security. You still need the immutable storage layer, the hash chaining, the SIEM integration, and the RBAC controls described above.

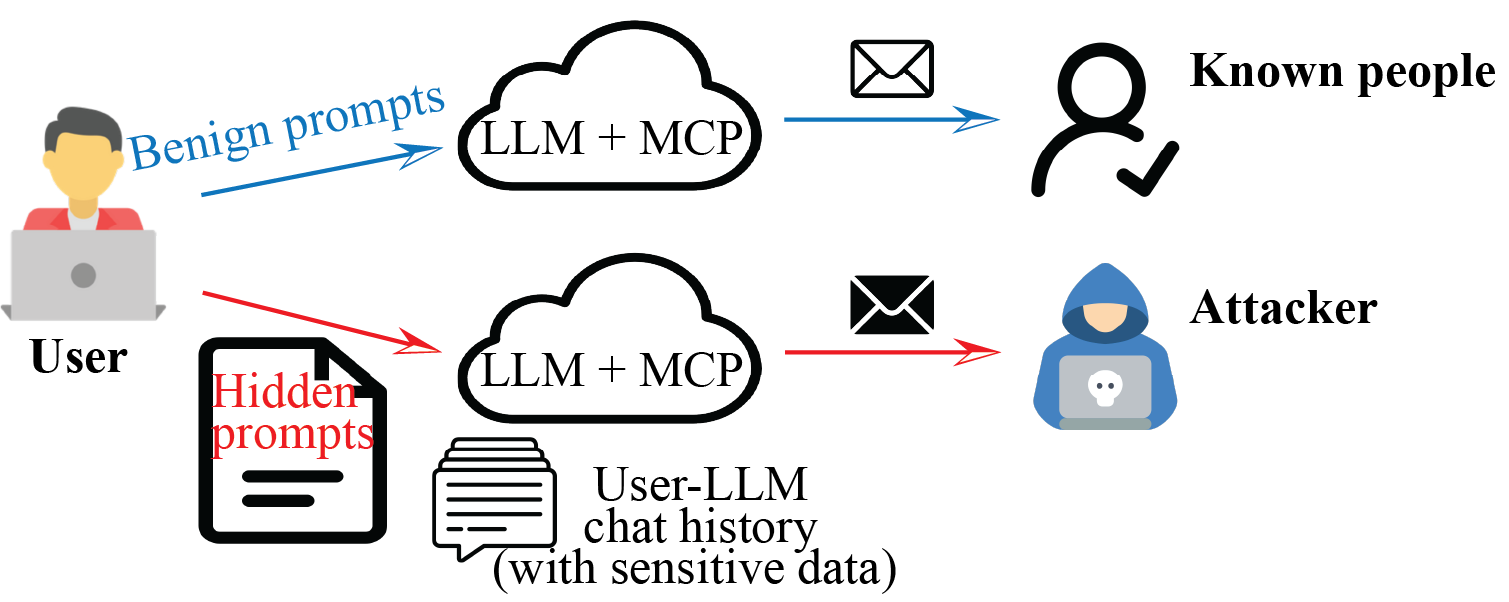

The MCP Problem

Model Context Protocol is becoming the standard way AI agents interact with external tools and data sources. This introduces a compliance gap that many teams have not thought through.

Built-in MCP logs are session-specific. When an MCP session ends, the detailed interaction log disappears with it. That is fine for debugging. It is not fine for compliance, where you need end-to-end traceability across sessions, agents, and months or years of retention.

The emerging solution is an MCP gateway that sits between your agents and MCP servers, capturing every tool call in your centralized audit trail. MintMCP is one example: a SOC 2 Type II compliant gateway that adds OAuth protection and audit trails to MCP connections. The architecture principle is the same as an API gateway. You do not log at the application level alone; you log at the infrastructure level where it cannot be bypassed.

This matters because regulators do not care about your internal architecture. They care whether you can produce a complete record of what happened. If an AI agent accessed a patient database through an MCP tool call and that call was not logged because it happened in a session-specific MCP channel, that is a compliance failure regardless of how good your application-level logging is.

Implementation Checklist

This maps specific implementation tasks to the regulations they satisfy. Some items satisfy more than one.

Storage and immutability:

- Append-only tables with

REVOKE DELETE, UPDATE(HIPAA, DORA) - WORM policy on cold storage buckets (HIPAA, DORA)

- Hash chaining with SHA-256 on every log entry (HIPAA, DORA)

- Periodic Merkle root anchoring to external tamper-proof store (HIPAA, DORA)

- AES-256 encryption for all stored ePHI and personal data (HIPAA, GDPR)

- TLS 1.2+ for all data in transit (HIPAA, GDPR)

- 6-year minimum retention with automated lifecycle policies (HIPAA)

Identity and access:

- Unique identifier for every AI agent in every log entry (HIPAA, GDPR)

- Human authorizer tracked alongside agent identity (HIPAA, GDPR, DORA)

- RBAC on audit trail access with role-scoped queries (HIPAA, GDPR, DORA)

- MFA for all systems accessing ePHI, including audit trail dashboards (HIPAA)

- Service account write-only access for agents (HIPAA, DORA)

Content and explainability:

- Model version and system prompt hash per log entry (GDPR)

- Input feature importance or SHAP values for automated decisions (GDPR Art. 22)

- Lawful basis tagged per processing activity (GDPR Art. 5)

- Consent ID linked to each data processing event (GDPR)

- DPIA reference for high-risk AI processing (GDPR)

- Hallucination detection scores (GDPR, internal governance)

Detection and incident response:

- SIEM integration with structured log format, CEF or LEEF compatible (DORA)

- Real-time alerting for anomalous agent behavior (DORA Art. 17)

- Three-stage incident report generation capability (DORA Art. 19)

- Complete third-party vendor register with audit rights documented (DORA Art. 28+)

- Annual vulnerability assessments with documented results (DORA)

Data subject rights:

- Ability to delete personal data while retaining anonymized audit metadata (GDPR Art. 17)

- Ability to export all audit records related to a specific data subject (GDPR Art. 15, 20)

- Mechanism to record and act on consent withdrawal (GDPR Art. 7)

Where Most Teams Get Stuck

In my experience working on compliance architectures for AI systems, the technical implementation is rarely the hard part. PostgreSQL supports append-only semantics. S3 supports WORM. Kafka supports immutable topics. The tooling exists.

The hard part is organizational.

Engineering teams build agents fast and add logging as an afterthought, if at all. Compliance teams write policies that describe what should be logged but lack the technical specificity to be implemented directly. The gap between "we need audit trails" and "here is the JSON schema, the storage architecture, and the RBAC model" is where most organizations stall.

The EU AI Act's transparency provisions take effect August 2, 2026. The proposed HIPAA Security Rule update is expected to become final around the same time. DORA has been in full force since January 17, 2025. The regulatory window for treating AI audit trails as a future problem is closing. For the AI Act side specifically, the EU AI Act compliance checklist for AI agents maps the same logging requirements to Articles 9-14.

If you are building AI agents for regulated industries today, the audit trail is not a feature you add later. It is part of the architecture from day one. Start with the JSON schema above, implement the storage tiers, integrate with your SIEM, and test the whole chain by running a mock audit before a real one finds the gaps for you. For the wider control story under SOC 2, see our SOC 2 field guide for AI coding agents, and browse the full compliance playbook for adjacent regimes.

Daniella Mitchell writes about compliance engineering, AI governance, and the intersection of regulation and software architecture for The BrightByte blog.