January 20, 2026. A Chinese AI lab nobody heard of released a model. Five days later, Nvidia lost $600 billion in market value. Marc Andreessen called it "AI's Sputnik moment."

That lab was DeepSeek. And the AI industry hasn't been the same since.

Key findings from the 2025-2026 AI model landscape:

- DeepSeek trained a GPT-4-class model for $6M — roughly 1/17th of what OpenAI reportedly spent on GPT-4

- API prices crashed over 90% in twelve months; DeepSeek charges $0.27/M tokens vs GPT-4o's $5.00

- Open source models (Llama 4, Qwen 3, Mixtral) now match proprietary models on most standard benchmarks

- Three companies — OpenAI, Anthropic, and xAI — raised $86.3 billion in 2025, accounting for 38% of all AI funding

The $6 Million Disruption

DeepSeek claimed they trained their V3 model for $6 million. For context: OpenAI reportedly spent $100 million on GPT-4. Meta used approximately ten times more compute for their comparable Llama 3.1.

The numbers don't lie. DeepSeek's API launched at $0.56 per million tokens—20 times cheaper than GPT-4o at the time. OpenAI and Google scrambled to slash prices. The compute moat that was supposed to protect Big Tech? DeepSeek put a serious crack in it. Whether it's fully shattered depends on how you define frontier — training a GPT-4-class model cheaply is different from training whatever comes after GPT-5.

By January 27, DeepSeek's app topped the iOS App Store in the US, beating ChatGPT. A free chatbot from China, running on weaker chips, matching OpenAI's reasoning capabilities.

Here's what happened: DeepSeek proved that algorithmic efficiency beats brute-force hardware. The economic barrier to frontier AI? Much lower than Silicon Valley wanted you to believe.

The Current Price Wars

Let's talk money. Here's what you're paying per million tokens in January 2026:

| Provider | Model | Input | Output | Notes |

|---|---|---|---|---|

| DeepSeek | V3/R1 | $0.27 | $1.10 | Best value, MIT license |

| xAI | Grok 4.1 | $0.20 | $0.50 | Cheapest major provider |

| Gemini 2.5 Pro | $1.25 | $10.00 | Middle ground | |

| OpenAI | GPT-4o | $5.00 | $15.00 | Premium pricing |

| Anthropic | Claude Sonnet 4 | $3.00 | $15.00 | Strong coding |

| Anthropic | Claude Opus 4 | $15.00 | $75.00 | Most expensive |

The math is brutal. What makes the pricing gap particularly painful is that benchmark differences between the cheap and expensive tiers have shrunk to single-digit percentages on most standard evaluations. A complex task costing $15 with GPT-5 costs roughly $0.50 with DeepSeek. That's a 97% cost reduction for comparable quality on most benchmarks.

We saw this firsthand. One of our compliance clients was running document summarization through GPT-4 at roughly $2,100 a month. In February we helped them switch to DeepSeek V3 for the same workload. Quality dipped maybe 3-4% on our internal evals — slightly less precise section headers, occasional formatting inconsistencies. Cost dropped to $127 a month. Nobody on their legal team noticed the quality change. The CFO noticed the savings immediately.

The Big Money Moves

While prices crashed, valuations exploded. The disconnect is fascinating.

Anthropic's trajectory was the most dramatic. They reached a $350 billion valuation on the back of investments that read like a bidding war: Microsoft put in $5 billion, Nvidia dropped $10 billion, Google added another billion on top of their existing $2 billion stake, and Amazon's total hit $8 billion. The price tag? Anthropic committed to $30 billion in Azure compute purchases from Microsoft. Call it an investment if you want. I'd call it a customer acquisition cost with extra steps.

OpenAI pivoted toward hardware, paying $6.5 billion for Jony Ive's startup. When your API margins are getting crushed by a Chinese lab charging pennies per token, owning the device layer starts looking attractive.

Nvidia and Google played defense in different ways. Nvidia cut a $20 billion deal with Groq and hired their key engineers — a diversification move after losing $600 billion in market cap in a single week. Google went infrastructure, spending $32 billion on Wiz for AI-driven cloud security. Different strategies, same motivation: hedge against the commodity pricing that DeepSeek unleashed.

The total? OpenAI, Anthropic, and xAI raised $86.3 billion in 2026—38% of all AI funding. In Q4 alone, their $46 billion represented over half the quarter's total investment.

Open Source Caught Up

Here's the part that should worry enterprise vendors: open source models aren't playing catch-up anymore. They're winning.

Qwen (Alibaba) became the most downloaded model family by year's end. Their Qwen3 series uses MoE architecture, exceeds 1 trillion parameters, supports 119 languages, and hits 92.3% on AIME25 math benchmarks. License: permissive commercial use.

Llama 4 (Meta) launched with Scout and Maverick variants. Scout offers up to 10 million token context windows. The ecosystem around Llama is enormous — over 4,200 adapters and fine-tunes on HuggingFace alone, plus dozens of inference frameworks that treat it as a first-class citizen.

Mistral keeps shipping. Their Mixtral uses 8 expert networks, activates 2 per token, achieving 6x faster inference than comparably-sized dense models. Apache 2.0 license—fully permissive.

DeepSeek open-sourced everything. V3 and R1 are MIT licensed. You can run them locally, fine-tune them, sell products built on them. No API dependency.

The practical result? For most use cases, you don't need proprietary models anymore.

| Use Case | Best Open Source Option |

|---|---|

| General chat | Llama 3.3 70B or Qwen 2.5 72B |

| Coding | DeepSeek Coder V2 |

| Long context (RAG) | Qwen 2.5 or Llama 3.1 (128k window) |

| Multilingual | Qwen (119 languages) |

| Speed-critical | Mixtral 8x22B |

What the Giants Released

The proprietary labs shipped real capability upgrades in 2025, but here's the thing — the gap between their models and open source alternatives narrowed rather than widened.

OpenAI released GPT-5 at mid-year, then iterated to GPT-5.2 by December with Instant, Thinking, and Pro variants. Long-context support and agent workflows are meaningfully better than GPT-4. Still expensive, and the reasoning tokens add up fast.

Google's Gemini line moved faster than expected. Gemini 2.5 Pro with Deep Think mode, followed by Gemini 3 Pro in November, posted 87.2% on ARC-AGI-2 — up from 72% six months prior. Their Antigravity environment for autonomous tasks is the most ambitious agent playground anyone's shipped so far.

Anthropic's Claude 4.5 family (Haiku, Sonnet, Opus) landed between September and November. The extended thinking mode — where the model argues with itself before answering — produces noticeably better results on complex reasoning tasks, though it burns through tokens at a rate that makes finance teams nervous. Meta rounded out the year with Llama 4's native multimodal capabilities and a 10-million-token context window that no other open model comes close to matching.

The Industry Alliance Nobody Expected

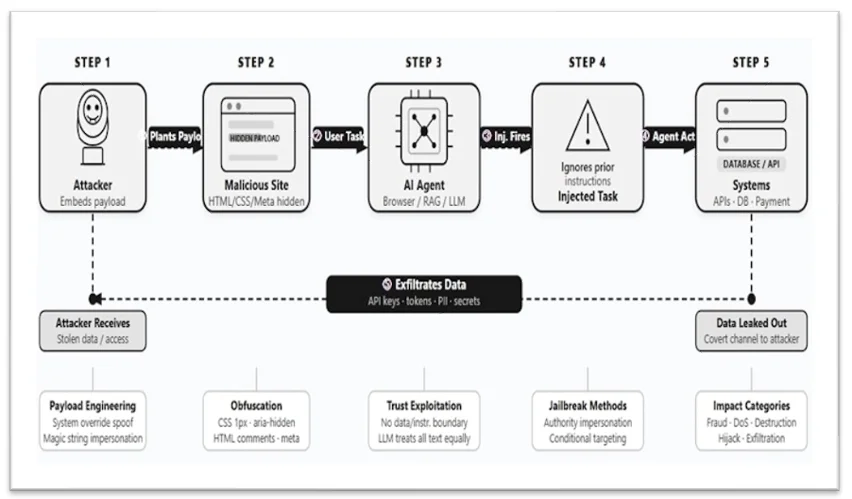

In a move that surprised everyone, Microsoft, Google, OpenAI, and Anthropic formed the Agentic AI Foundation. Backed by The Linux Foundation.

The goal: open source standards for AI agents. Companies that compete brutally on models are cooperating on infrastructure. Why? Because agent interoperability benefits everyone—or at least, benefits the companies that control the agents.

Cynical take: they're trying to establish standards before open source projects do. Whoever sets the standards controls the ecosystem.

What This Means For Your Budget

Here's the practical takeaway. The AI model market in 2025 split into three tiers:

Tier 1: Commodity tasks (content generation, summarization, basic Q&A)

- Use: DeepSeek V3, Gemini Flash, open source models

- Cost: $0.20-1.00 per million tokens

- Quality: 90% of what you need

Tier 2: Professional tasks (code review, complex analysis, customer-facing)

- Use: GPT-4o, Claude Sonnet, Gemini Pro

- Cost: $3-15 per million tokens

- Quality: Better reliability, fewer hallucinations

Tier 3: Mission-critical tasks (legal documents, medical, financial analysis)

- Use: Claude Opus, GPT-4 Turbo, human review

- Cost: $15-75 per million tokens

- Quality: Maximum accuracy, audit trails

The mistake most companies make: using Tier 3 pricing for Tier 1 tasks. You're burning money. The mistake we see most often — and we've made it ourselves — is defaulting to the most capable model for every task because switching costs feel higher than the savings justify, which is almost never true once you run the numbers.

The Uncomfortable Truth

The AI market in 2026 proved something uncomfortable: training frontier models isn't as expensive as Big Tech claimed. I'd argue the moat was always more about marketing than mathematics — but I'm aware that's a convenient narrative for anyone not spending billions on compute.

DeepSeek showed you can match GPT-4 performance with $6 million and smart engineering. Open source models caught up to proprietary ones. Prices crashed 90% in twelve months.

For enterprises, this is good news. You have options now. Real options, not just "which expensive API should we lock ourselves into."

But here's the catch: the investment frenzy didn't slow down. $350 billion valuation for Anthropic. $86 billion raised by three companies. $40 billion data center acquisitions.

Someone's going to lose big money. The question is whether it's the investors betting on proprietary lock-in, or the companies betting on commoditized AI.

My money's on the second group being right. Then again, I was equally confident about crypto's inevitable takeover in 2021, so calibrate accordingly.

What To Do Now

If you're starting an AI project:

- Default to open source or cheap APIs for prototypes

- Benchmark DeepSeek, Qwen, and Llama against your use case

- Only upgrade to expensive models if you hit quality walls

If you're already paying for AI APIs:

- Audit your usage by task type

- Move commodity tasks to cheaper providers

- Calculate how much you'd save with a tiered approach

If you're building AI products:

- Don't depend on single vendor pricing

- Abstract your model layer for easy switching

- Watch the open source space — new models drop weekly and the performance gaps keep shrinking

The AI models war isn't over. But the pricing war already has a winner: anyone buying AI services in 2026 pays a fraction of what they would have in 2025.

Take advantage of it while it lasts.

Sources: Pricing data from official API documentation as of January 2026. Funding figures from CB Insights State of AI 2026. Benchmark scores from published model cards and ARC-AGI leaderboard. Company announcements sourced from press releases and SEC filings.