The Digital Operational Resilience Act became enforceable in January 2025, and by end of that year, only half the financial sector had reached full compliance. Now regulators are shifting from paperwork to proof. If your institution runs AI for fraud detection, KYC, credit scoring, or transaction monitoring, here is what you need to know about passing an audit in 2026.

Why DORA Matters for AI (and Why Most Teams Are Getting It Wrong)

The $38 billion AI-in-finance market is growing at 30.6% annually and projected to reach $190 billion by 2030 (MarketsandMarkets). Banks are spending over $53 billion on AI this year alone. And 85% of financial institutions expect to be running AI across multiple functions by end of 2026.

The problem: most of these AI deployments were built for accuracy, speed, or cost savings. Almost none were built for operational resilience.

DORA (Regulation EU 2022/2554) is the EU's answer to that gap. It applies to at least 22,000 organizations across 20 types of financial entities, plus their ICT service providers. And here is the part that catches AI teams off guard: DORA does not treat AI as a special category. It treats AI as ICT. Full stop. Every requirement that applies to your core banking platform applies to your GPT-4 integration.

BaFin made this explicit in December 2025 when it published a 35-page guidance document on ICT risks in AI use. It was the first national authority to formally position AI systems within the DORA framework. The guidance is technically non-binding, but supervisors across Europe are already using it as a benchmark.

So when your compliance officer asks whether your AI chatbot or fraud detection model falls under DORA, the answer is yes. The more useful question is: which parts of DORA hit AI systems the hardest?

The Five Pillars, Through an AI Lens

DORA rests on five pillars. Each one creates specific obligations for AI-dependent financial institutions. I will walk through the ones that matter most for AI teams, because frankly, Pillar 5 (information sharing) is straightforward, and you can read the regulation text for that.

Pillar 1: ICT Risk Management (Articles 5-16)

Article 5 requires the management body to define, approve, and oversee the ICT risk management framework. Members must "actively keep up to date with sufficient knowledge and skills to understand and assess ICT risk." That includes AI risk. Your board needs to understand what model drift is, why hallucination rates matter, and how adversarial inputs work. Not at a PhD level, but enough to ask the right questions.

Article 7 requires you to identify, classify, and document all ICT assets. For AI systems, that means every model, every LLM integration, every ML pipeline, and every data flow. If you are using three different OpenAI endpoints for three different use cases, each one is a separate ICT asset with its own risk profile.

Article 9 demands continuous monitoring and control. For AI, that translates to drift detection, output validation, confidence score tracking, and anomaly alerting. A model that quietly degrades over six months is an unmanaged ICT risk.

Article 12 is arguably the most impactful for AI. It requires tamper-proof, encrypted logging with a minimum 5-year retention period and clock synchronization across all systems. For AI specifically: every inference, every input/output pair, every model version, every confidence score must be logged with an audit trail. I have talked to compliance teams who assumed their existing application logs were sufficient. They were not even close.

Pillar 2: Incident Reporting (Articles 17-19)

This is where AI teams tend to panic, and for good reason.

Article 17 requires you to detect, manage, and notify ICT-related incidents with early warning indicators. Article 18 provides classification criteria based on number of affected clients, reputational impact, duration, data losses, and economic impact.

Article 19 sets the reporting timeline:

| Report | Deadline | What to Include |

|---|---|---|

| Initial notification | 4 hours after classification as major (no later than 24 hours after detection) | Basic facts, initial classification |

| Intermediate report | 72 hours after initial notification | Updated analysis, impact assessment |

| Final report | 1 month after latest intermediate report | Root cause analysis, remediation measures |

The 4-hour window is the one that keeps CTO offices up at night. Consider this scenario: your AI fraud detection model drifts because customer spending patterns shifted after a holiday season. The model starts generating a 95% false positive rate. Customers get their transactions blocked. By the time a human analyst spots the pattern in a morning review, you may already be past the detection window.

You need automated model performance monitoring with predefined thresholds that trigger incident classification. Manual review cycles are too slow for DORA timelines.

And to be clear about what qualifies: model drift affecting a critical function is a major ICT incident. An AI hallucination in a KYC review that reaches a customer decision is a major ICT incident. Data poisoning is a major ICT-related incident. Bias detection showing discriminatory outcomes triggers both incident reporting and regulatory notification.

Pillar 3: Resilience Testing (Articles 24-27)

All financial entities must conduct annual testing of systems supporting critical functions. That testing list under Article 25 includes vulnerability assessments, source code reviews, scenario-based tests, performance testing, and penetration testing.

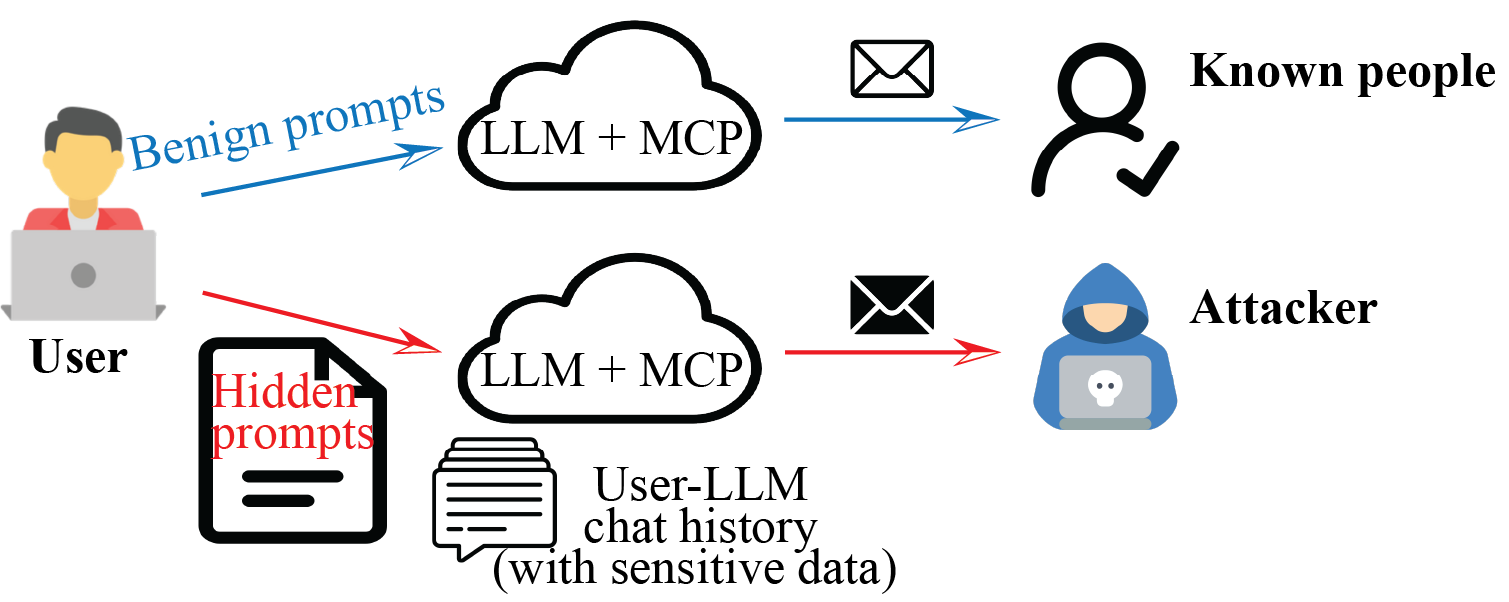

For AI, annual penetration testing must cover adversarial input testing (prompt injection, jailbreaking, data manipulation), data leakage testing (training data extraction, PII exposure), and API security testing.

Larger institutions face an additional requirement: Threat-Led Penetration Testing (TLPT) every three years under Article 26, performed on live production systems. This is no longer voluntary under DORA. Red team exercises must specifically target AI decision systems, including supply chain attacks through AI vendor dependencies, model extraction attempts, and data poisoning scenarios.

Pillar 4: Third-Party Risk (Articles 28-44)

This pillar is where the regulation meets the reality of how most AI systems are actually built in 2026.

Article 28 states it plainly: financial entities remain fully responsible even when outsourcing to AI vendors. Using an LLM API without proper contractual arrangements is a DORA violation. And the Register of Information (RoI) deadline has already passed. By April 2025, financial entities had to report all ICT vendor contracts to national competent authorities.

Article 29 addresses concentration risk. If your KYC, AML, fraud detection, and credit scoring all depend on GPT-4 via the OpenAI API, that is textbook concentration risk under DORA. The regulation asks whether you are contracting with a provider that is "not easily substitutable." LLM APIs, with their model-specific behaviors, fine-tuning, and deep integration patterns, are exactly that.

Article 30 mandates specific contract terms. For AI vendors, your contracts must include:

- Quantitative SLAs: uptime (99.95%+), inference latency (<500ms), accuracy baselines

- Unrestricted audit rights for critical functions, including ability to inspect model behavior and training data governance

- Exit strategies with transition periods of 6-12 months and data portability in standard formats

- Incident notification requirements specific to AI failures

- EU data residency provisions

- Subcontracting controls with approval rights over model training subcontractors

Try getting those terms into an OpenAI Enterprise agreement. It is possible, but it requires negotiation that most procurement teams are not prepared for.

The 19 Critical Third-Party Providers (and Who Is Missing)

On November 18, 2025, the European Supervisory Authorities designated the first 19 Critical ICT Third-Party Providers (CTPPs) under Article 32. The list includes AWS, Google Cloud, Microsoft, IBM, Oracle, SAP, and several IT infrastructure firms. CTPPs face direct ESA oversight and fines of up to EUR 5 million plus 1% of average daily global turnover per day of non-compliance, for up to six months.

OpenAI and Anthropic are not on the list yet. The CTPP designation is updated annually, and as financial institutions deepen their reliance on LLM APIs for core functions, these providers will likely meet the criteria. BaFin's December 2025 guidance already names "Foundation Model Providers (e.g., OpenAI, Anthropic, Google)" as ICT third-party providers requiring DORA-compliant contracts. The formal CTPP designation just adds direct ESA oversight on top.

The practical implication: even without CTPP status, your contracts with LLM providers must comply with Articles 28-30 today. Not next year. Today.

Which AI Use Cases Trigger DORA Requirements?

Not every AI use case carries the same regulatory weight. Here is how common financial AI applications map to DORA obligations:

| AI Use Case | DORA Classification | Key DORA Articles | Also EU AI Act High-Risk? |

|---|---|---|---|

| Credit scoring | Critical function | Art. 5-12, 17-19, 25-27, 28-30 | Yes (Annex III, Category 5b) |

| Fraud detection | Critical function | Art. 5-12, 17-19, 25-27 | No (explicit exception) |

| KYC/AML screening | Critical function | Art. 5-12, 17-19, 25-27, 28-30 | Yes (if profiling individuals) |

| Algorithmic trading | Critical function | Art. 5-12, 17-19, 25-27 | Subject to MiFID II + DORA |

| Transaction monitoring | Critical function | Art. 5-12, 17-19, 25-27 | Depends on implementation |

| Customer chatbot | Important function (if handling account info) | Art. 5-12, 28-30 | Generally no |

| Internal document search | Supporting function | Art. 5-9, 28-30 | No |

| Insurance pricing | Critical function | Art. 5-12, 17-19, 25-27, 28-30 | Yes (life and health) |

The distinction matters because critical functions trigger the full DORA stack: TLPT, detailed SLAs, unrestricted audit rights, exit strategies, and the 4-hour reporting window.

The Dual Compliance Problem: DORA Meets the EU AI Act

Financial institutions deploying AI face overlapping regulatory pressure. DORA became applicable in January 2025. The EU AI Act's high-risk AI obligations become enforceable on August 2, 2026, just a few months from now.

| Aspect | DORA | EU AI Act |

|---|---|---|

| Focus | Operational resilience, ICT risk | AI safety, fundamental rights |

| Risk management | ICT risk framework (Art. 6) | AI risk management system (Art. 9) |

| Testing | Penetration testing, TLPT | Conformity assessments, bias testing |

| Logging | 5-year retention, tamper-proof | Automatic event logging throughout lifecycle |

| Transparency | Incident reporting to regulators | Explainability to individuals and regulators |

| Penalties | Up to 2% global turnover or EUR 10M | Up to 3% global turnover or EUR 15M |

Credit scoring AI is a good example of where both regulations collide. The EU AI Act classifies it as high-risk under Annex III, requiring explainability, bias testing, data governance documentation, and human oversight. DORA adds ICT risk management, incident reporting, resilience testing, and third-party oversight on top. Your credit scoring model needs to be explainable, auditable, resilient, continuously monitored, and contractually controlled. That is a lot of infrastructure for a single model. We walked through the Articles 9-14 side of that stack in the EU AI Act compliance checklist for AI agents.

The European Commission recognized this overlap and introduced the Digital Omnibus proposal in November 2025, aiming to consolidate reporting under DORA, the AI Act, NIS2, and GDPR into a single incident reporting point. This simplification is welcome, but it is still in the legislative process. Plan for full dual compliance now, and treat any simplification as a future bonus.

What BaFin's December 2025 Guidance Actually Says

I keep referencing this document because it is the most concrete, practical guidance any national authority has published on AI under DORA. A few key points worth highlighting.

AI strategy is mandatory (sort of). If AI supports critical or important functions, BaFin expects a formulated AI strategy, either standalone or integrated into your IT/DORA strategy. It must clarify why AI is being used, what risks are acceptable, and what resource planning looks like. The guidance is non-binding, but a BaFin examiner asking to see your AI strategy and finding nothing is going to write that up.

Lifecycle management. BaFin expects governance across the entire AI lifecycle: development, testing, ongoing operation, and decommissioning. Model retraining triggers, version control policies, and deprecation procedures all need to be documented.

Generative AI gets extra scrutiny. The guidance applies heightened requirements for generative AI and LLM systems. These must be fully embedded into existing ICT governance, testing, and third-party risk frameworks. BaFin is not saying you cannot use ChatGPT. It is saying that if you do, it needs the same level of governance as your core banking system.

Building an AI System That Survives the Audit

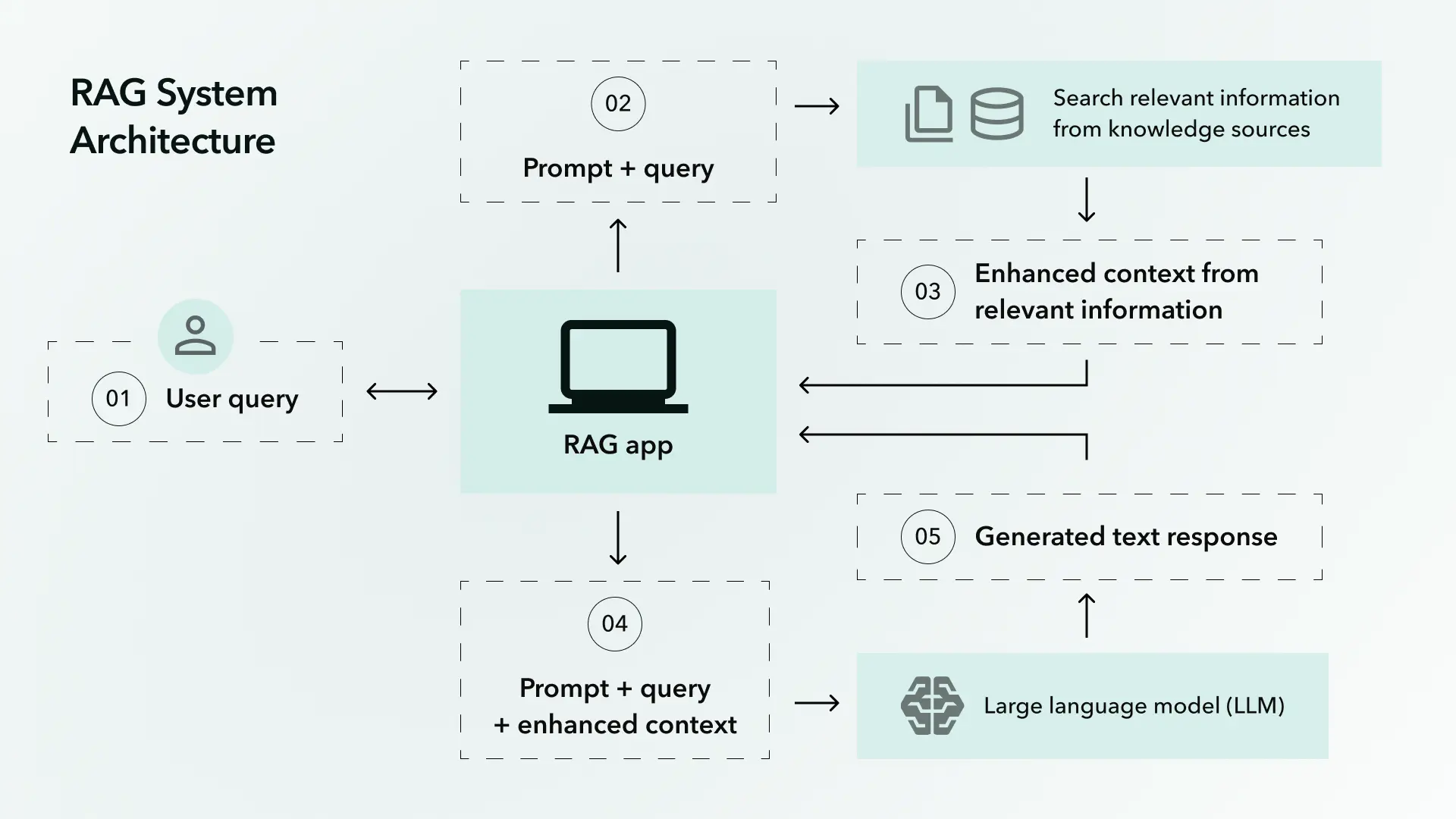

Based on the regulation text and BaFin's guidance, here is what a DORA-compliant AI architecture actually requires.

Logging That Meets Article 12

This is the foundation. Every AI inference must capture:

- Input data (sanitized of raw PII where possible, but traceable)

- Output prediction or decision

- Model version and configuration

- Confidence score

- Timestamp from a synchronized clock source

- User or system that triggered the inference

All of this stored for a minimum of 5 years, encrypted, tamper-proof, with access controls and an audit trail showing who accessed the logs. Clock synchronization is required across all ICT systems. I have seen teams overlook this last point. If your AI system runs on AWS and your logging runs on Azure, their clocks need to agree. For the deeper schema and tamper-evidence patterns, see our AI audit trail architecture guide.

Model Monitoring That Enables 4-Hour Reporting

You cannot report an incident in 4 hours if you do not detect it for 3 days. Required monitoring includes:

- Drift detection: Statistical tests comparing production data distributions against training data

- Performance baselines: Accuracy, precision, recall, and F1 tracked against predefined thresholds

- Anomaly detection: Unusual patterns in input data, output distributions, or confidence scores

- Automated alerting: Thresholds that trigger incident classification procedures without waiting for human review

Third-Party Contracts That Pass Scrutiny

For every AI vendor (LLM provider, ML platform, data provider), your contract file should include:

- Clear description of AI services, model types, use cases, and known limitations

- SLAs with quantitative targets, not vague uptime promises

- Incident notification clauses requiring the vendor to report AI-specific failures within defined timeframes

- Audit rights, including on-site inspection for critical functions

- Exit strategy with data export formats, transition period, and vendor cooperation obligations

- EU data processing and storage requirements

Business Continuity for AI Failures

Article 11 requires a Business Impact Analysis assessing the impact of AI system failure. Practical requirements include:

- Defined Recovery Time Objectives (RTOs) and Recovery Point Objectives (RPOs) for each AI system

- Fallback mechanisms for every AI-dependent critical function (rules-based systems, manual processes, alternative providers)

- Documented model versioning with ability to roll back to last known good version

- Annual testing of continuity plans, including vendor failover scenarios

The Compliance Costs Are Real

According to Deloitte data, only 50% of institutions reached full DORA compliance by end of 2025. Another 38% pushed their compliance targets into 2026. Most institutions estimate DORA compliance costs between EUR 2-5 million. Large financial groups report total DORA program spend approaching EUR 100 million.

These numbers track with what I am hearing from compliance teams. The 39% of entities dedicating 5-7 full-time employees to DORA compliance are the ones making progress. The ones who tried to bolt DORA onto existing GRC processes with a two-person team are the ones scrambling now.

And 70% of institutions expect permanently higher running costs for IT and control functions. This is not a one-time compliance project. DORA creates ongoing obligations: annual testing, continuous monitoring, quarterly vendor reviews, incident response drills.

For AI specifically, the costs compound. You need ML engineers who understand compliance, compliance officers who understand ML, and infrastructure that supports both. That intersection of skills is expensive and scarce.

What This Looks Like in Practice

Let me walk through a concrete scenario. A mid-size European bank uses an AI model for transaction monitoring, sourced through an LLM API provider. Here is their compliance surface:

Risk management (Pillar 1): The model is inventoried as a critical ICT asset. Its risk profile is documented, including dependencies on the API provider, training data characteristics, known failure modes. Internal audit has staff qualified to assess AI model risk. Monitoring dashboards track drift, accuracy, and latency in real time.

Incident reporting (Pillar 2): Automated alerting detects when the model's false positive rate exceeds the predefined threshold. The system classifies the anomaly, and within 4 hours, the compliance team files an initial notification with their NCA. At 72 hours, they submit an intermediate report with impact analysis. At one month, the final report includes root cause (seasonal spending pattern shift) and remediation (model retrained on updated data, monitoring threshold adjusted).

Resilience testing (Pillar 3): The AI model undergoes annual penetration testing, including adversarial input scenarios and data leakage tests. Every three years, an external red team runs TLPT on the live production system, specifically targeting the AI decision layer.

Third-party management (Pillar 4): The contract with the LLM provider includes SLAs for inference latency and uptime, audit rights, an exit strategy with a 9-month transition period, and incident notification obligations. The bank maintains a tested fallback to a secondary model provider.

This is not hypothetical perfection. Dutch banks like ING, ABN AMRO, and Rabobank were among the first to submit their Register of Information in April 2025. German banks operating under BaFin's AI guidance are building exactly these architectures. The compliance infrastructure exists. It just requires deliberate engineering.

A Checklist for Your Next Board Meeting

If you are a CTO or compliance officer presenting AI governance to your board, these are the questions regulators will ask:

- Is every AI system inventoried in your ICT asset register with a criticality classification?

- Can you produce a tamper-proof audit trail for any AI decision made in the last 5 years?

- How fast can you detect and classify an AI model failure? Is it under 4 hours?

- Do your contracts with LLM providers include audit rights, SLAs, and exit strategies per Article 30?

- Have you assessed concentration risk across your AI vendor portfolio per Article 29?

- When was the last penetration test of your AI systems, and did it include adversarial inputs?

- What is your documented fallback if your primary AI provider goes down for 48 hours?

- Do you have an AI strategy document, as BaFin recommends, that your board has reviewed and approved?

If you cannot answer all eight, you have compliance gaps. The grace period is over. 2026 is when regulators expect to see functioning systems, not project plans. More on adjacent regimes in our compliance playbook.

Daniella Mitchell writes about the intersection of AI systems and regulatory compliance. She has been tracking DORA since its 2022 enactment and covers EU digital regulation for The BrightByte blog.